Quarkus Reactive Architecture

Quarkus is reactive. It’s even more than this: Quarkus unifies reactive and imperative programming. You don’t even have to choose: you can implement reactive components and imperative components then combine them inside the very same application. No need to use different stacks, tooling or APIs; Quarkus bridges both worlds.

This page will explain what we mean by Reactive and how Quarkus enables it. We will also discuss execution and programming models. Finally, we will list the Quarkus extensions offering reactive facets.

What is Reactive?

The Reactive word is overloaded and associated with many concepts such as back-pressure, monads, or event-driven architecture. So, let’s clarify what we mean by Reactive.

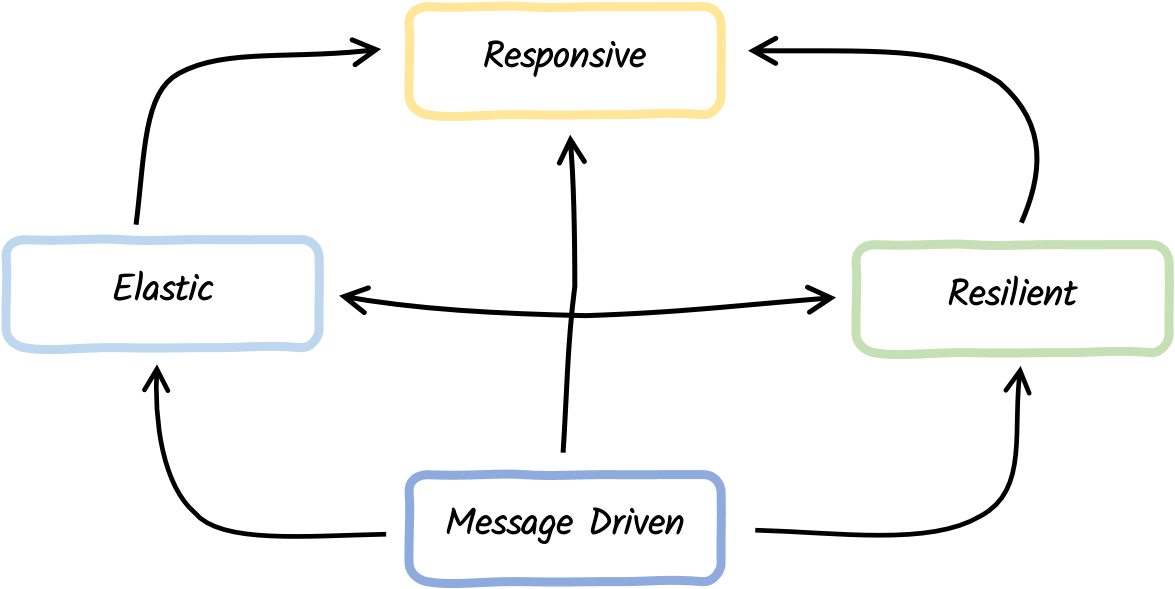

Reactive is a set of principles and guidelines to build responsive distributed systems and applications. The Reactive Manifesto characterizes Reactive Systems as distributed systems having four characteristics:

- Responsive - they must respond in a timely fashion
- Elastic - they adapt themselves to the fluctuating load
- Resilient - they handle failures gracefully
- Asynchronous message passing - the components of a reactive system interact using messages

In addition to this, the Reactive Principles white paper lists a set of rules and patterns to help the construction of reactive systems.

Reactive Systems and Quarkus

A reactive system is an architectural style that can be summarized as distributed systems done right. Relying on asynchronous message passing helps enforce loose coupling, in both space and time, between the different components. You send messages to virtual destinations. The receiver can be located anywhere, or may not even exist yet when the message is dispatched. The elasticity pillar allows scaling individual components up and down according to the load. Elasticity also provides redundancy, which helps with the resilience pillar. Failures are inevitable. Components forming a reactive system must handle them gracefully, avoid cascading failures, and adapt on their own.
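To make the idea of virtual destinations concrete, here is a minimal sketch in plain Java. It is not a Quarkus API: the `MailboxSketch` class, its `send` and `subscribe` methods, and the `orders` address are all hypothetical names chosen for illustration. Delivery is in-process and synchronous for brevity, whereas a real reactive system would typically go through a broker or an event bus.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Queue;
import java.util.function.Consumer;

// Hypothetical in-memory "bus" used only to illustrate decoupling in space and time.
class MailboxSketch {
    private final Map<String, Queue<String>> pending = new HashMap<>();
    private final Map<String, Consumer<String>> receivers = new HashMap<>();

    // The sender only knows the address, not who (if anyone) is listening.
    synchronized void send(String address, String message) {
        Consumer<String> receiver = receivers.get(address);
        if (receiver != null) {
            receiver.accept(message);
        } else {
            // No receiver yet: keep the message until one shows up.
            pending.computeIfAbsent(address, a -> new ArrayDeque<>()).add(message);
        }
    }

    // A receiver can register after messages were sent and still gets the backlog.
    synchronized void subscribe(String address, Consumer<String> receiver) {
        receivers.put(address, receiver);
        Queue<String> backlog = pending.remove(address);
        if (backlog != null) {
            backlog.forEach(receiver);
        }
    }

    static List<String> demo() {
        MailboxSketch bus = new MailboxSketch();
        List<String> received = new ArrayList<>();
        bus.send("orders", "order-1");          // sent before any receiver exists
        bus.subscribe("orders", received::add); // receiver appears later
        bus.send("orders", "order-2");          // delivered directly
        return received;
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```

The sender never holds a reference to the receiver, which is what lets the receiver move, scale, or start late without the sender changing.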

A responsive system can continue to handle requests while facing failures and under fluctuating load. Quarkus has been tailored for that. It provides features that help you design, implement, and operate reactive systems.

Reactive Applications

Quarkus is not only going to help you build reactive systems. It’s also going to make sure that each constituent enforces the reactive principles and is highly efficient.

Efficiency is essential, especially in the Cloud and in containerized environments. Resources, such as CPU and memory, are shared among multiple applications. Greedy applications that consume lots of memory are inefficient and put penalties on sibling applications. You may need to request more memory, CPU, or bigger virtual machines. It either increases your monthly Cloud bill or decreases your deployment density.

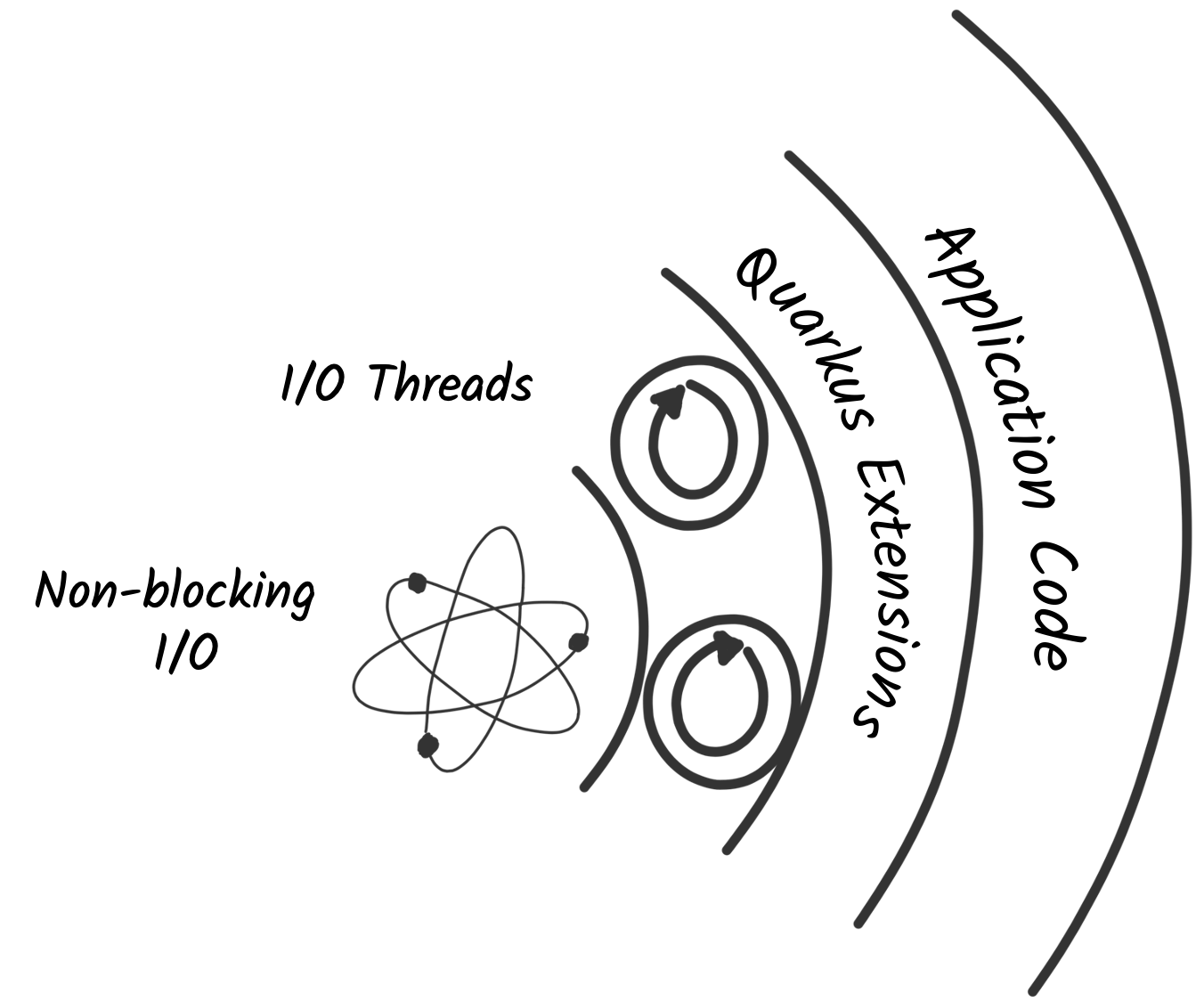

I/O is an essential part of almost any modern system. Whether it is to call a remote service, interact with a database, or send messages to a broker, these are all I/O-based operations. Handling them efficiently is critical to avoid greedy applications. For this reason, Quarkus uses non-blocking I/O, which allows a small number of OS threads to manage many concurrent I/O operations. As a result, Quarkus applications allow for higher concurrency, use less memory, and improve deployment density.

How does Quarkus enable Reactive?

Under the hood, Quarkus has a reactive engine. This engine, powered by Eclipse Vert.x and Netty, handles the non-blocking I/O interactions.

Quarkus extensions and the application code can use this engine to orchestrate I/O interactions, interact with databases, send and receive messages, and so on.

Reactive execution model

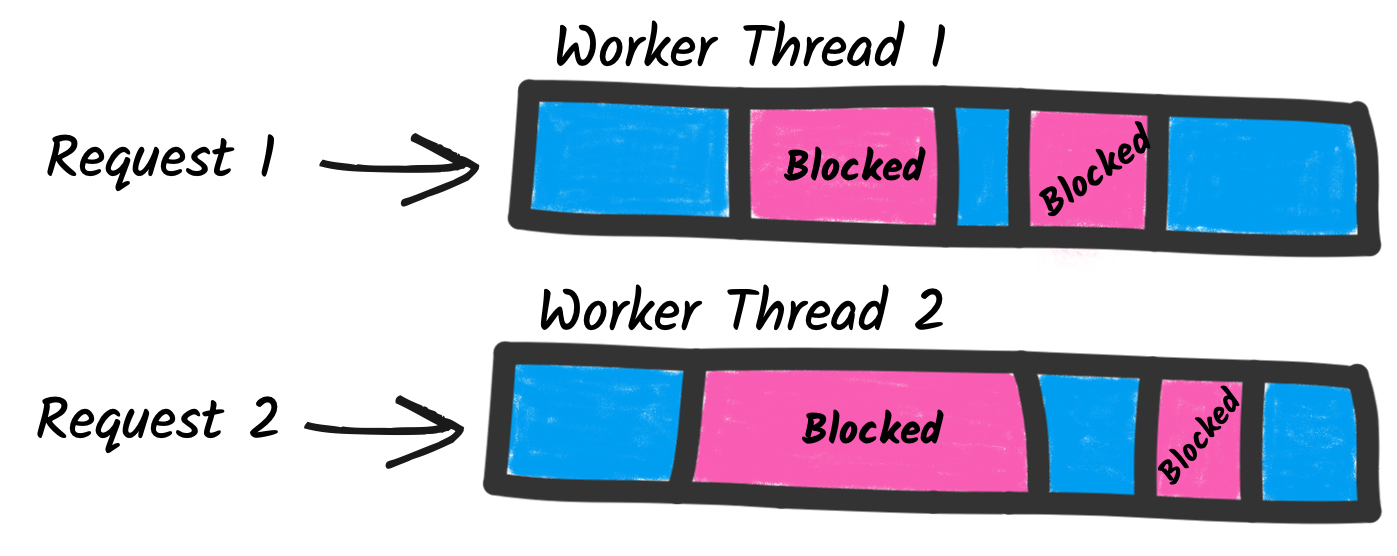

While using non-blocking I/O has tremendous benefits, it does not come for free. Indeed, it introduces a new execution model quite different from the one used by classical frameworks.

Traditional applications use blocking I/O and an imperative (sequential) execution model. So, in an application exposing an HTTP endpoint, each HTTP request is associated with a thread. In general, that thread is going to process the whole request and the thread is tied up serving only that request for the duration of that request. When the processing requires interacting with a remote service, it uses blocking I/O. The thread is blocked, waiting for the result of the I/O. While that model is simple to develop with (as everything is sequential), it has a few drawbacks. To handle concurrent requests, you need multiple threads, so, you need to introduce a worker thread pool. The size of this pool constrains the concurrency of the application. In addition, each thread has a cost in terms of memory and CPU. Large thread pools result in greedy applications.
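The thread-per-request model described above can be sketched with plain JDK classes. This is an illustration only, not how any particular framework is implemented: `BlockingPoolSketch` and `maxInFlight` are hypothetical names, and `Thread.sleep` stands in for a blocking remote call. The sketch counts how many simulated requests are being processed at the same time, which can never exceed the pool size.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;

// Illustrative only: plain JDK code, not a Quarkus API.
class BlockingPoolSketch {

    // Runs `requests` simulated blocking requests on `poolSize` worker threads and
    // returns the highest number of requests observed in flight at the same time.
    static int maxInFlight(int poolSize, int requests) {
        ExecutorService workers = Executors.newFixedThreadPool(poolSize);
        AtomicInteger inFlight = new AtomicInteger();
        AtomicInteger peak = new AtomicInteger();
        try {
            List<Future<?>> pending = new ArrayList<>();
            for (int i = 0; i < requests; i++) {
                pending.add(workers.submit(() -> {
                    int now = inFlight.incrementAndGet();
                    peak.accumulateAndGet(now, Math::max);
                    try {
                        Thread.sleep(50); // stands in for a blocking remote call
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    } finally {
                        inFlight.decrementAndGet();
                    }
                }));
            }
            for (Future<?> request : pending) {
                request.get(); // wait for every request to finish
            }
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            workers.shutdown();
        }
        return peak.get();
    }

    public static void main(String[] args) {
        System.out.println("peak concurrency: " + maxInFlight(4, 20));
    }
}
```

Each worker thread is idle while it sleeps, yet it cannot pick up another request. Raising concurrency in this model means adding threads, with the memory and CPU cost that implies.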

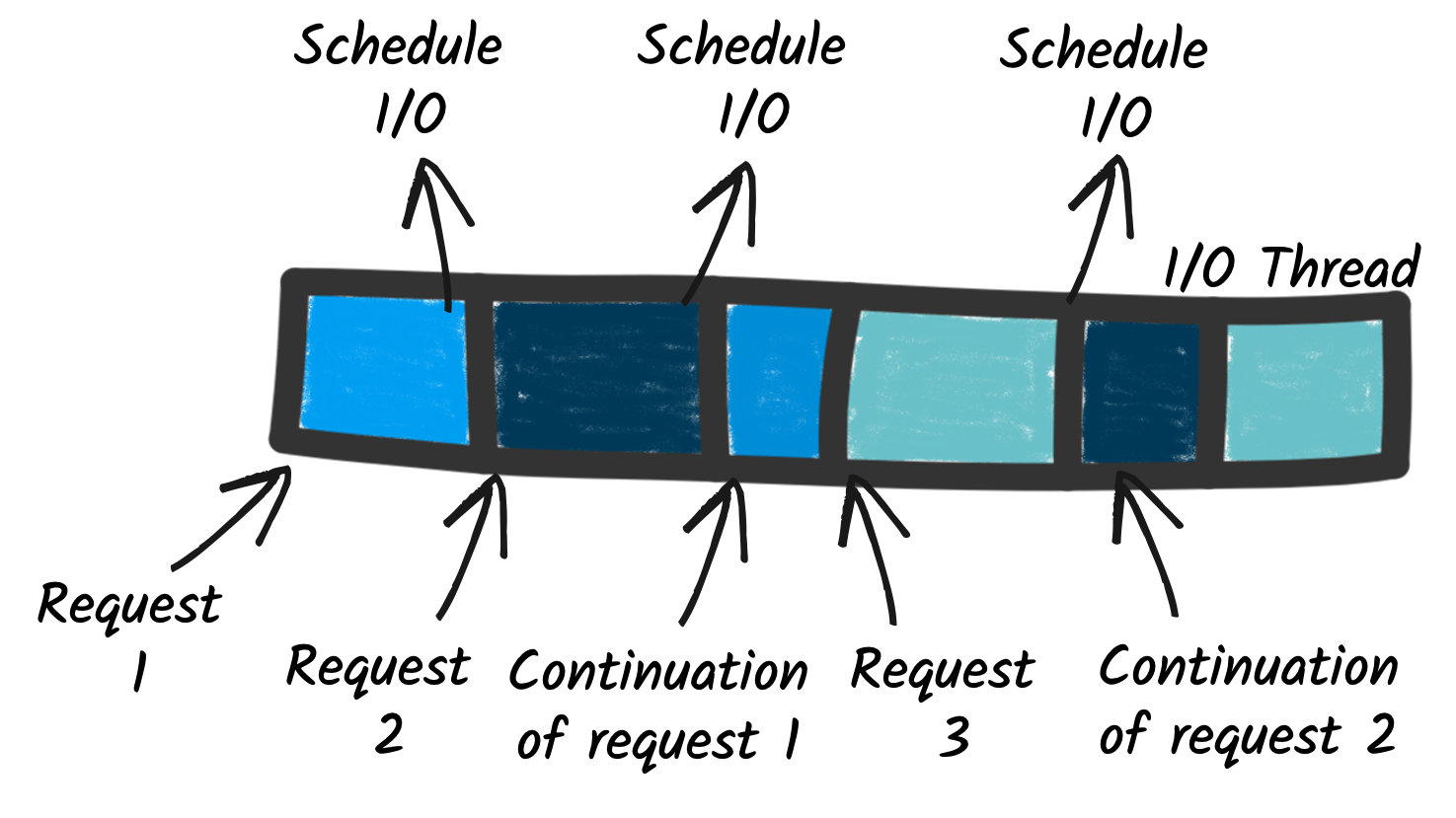

As we have seen above, non-blocking I/O avoids that problem. A few threads can handle many concurrent I/O operations. If we go back to the HTTP endpoint example, the request processing is executed on one of these I/O threads. Because there are only a few of them, you need to use them wisely. When the request processing needs to call a remote service, you can’t block the thread anymore. Instead, you schedule the I/O and pass a continuation, i.e., the code to execute once the I/O completes.

This model is much more efficient, but we need a way to write code to express these continuations.
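As a rough illustration of what passing a continuation looks like, the sketch below uses the JDK's `CompletableFuture` rather than any Quarkus or Mutiny API. The names `ContinuationSketch`, `fetchName`, and `greet` are hypothetical, and a scheduled timer stands in for an I/O operation that completes later without holding a thread while it waits.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

// Illustrative only: plain JDK code, not a Quarkus API.
class ContinuationSketch {

    // Hypothetical remote call: the result arrives later, and no thread is
    // blocked in the meantime. The timer plays the role of the I/O layer.
    static CompletableFuture<String> fetchName(ScheduledExecutorService timer, int id) {
        CompletableFuture<String> result = new CompletableFuture<>();
        timer.schedule(() -> { result.complete("user-" + id); }, 20, TimeUnit.MILLISECONDS);
        return result;
    }

    static String greet(int id) {
        ScheduledExecutorService timer = Executors.newSingleThreadScheduledExecutor();
        try {
            return fetchName(timer, id)
                    // The continuation: code to run once the "I/O" has completed.
                    .thenApply(name -> "Hello, " + name)
                    // join() blocks the caller; it is here only so the demo can
                    // return a value. Non-blocking code would keep chaining instead.
                    .join();
        } finally {
            timer.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(greet(7));
    }
}
```

The lambda passed to `thenApply` is the continuation. The caller describes what should happen next and returns, instead of waiting for the result.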

Reactive Programming Models

The Quarkus architecture, based on non-blocking I/O and message passing, supports multiple reactive development models that differ in how they express continuations. The two main ways to write reactive code with Quarkus are:

- Reactive Programming with Mutiny, and
- Coroutines with Kotlin

First, Mutiny is an intuitive, event-driven reactive programming library. With Mutiny, you write event-driven code. Your code is a pipeline receiving events and processing them. Each stage in your pipeline can be seen as a continuation, as Mutiny invokes them when the upstream part of the pipeline emits an event.

The Mutiny API has been tailored to improve the readability and maintenance of the codebase. Mutiny provides everything you need to orchestrate asynchronous actions, including concurrent execution. It also offers a large set of operators to manipulate individual events and streams of events.

Find more info about Mutiny and its usage in Quarkus in the Mutiny support documentation.

Coroutines are a way to write asynchronous code sequentially. A coroutine suspends the execution of the code during I/O and registers the rest of the code as the continuation. Kotlin coroutines are a good fit when you develop in Kotlin and only need to express sequential composition (a chain of dependent asynchronous tasks).

Unification of Imperative and Reactive

Changing your development model is not simple. It requires relearning and restructuring code in a non-blocking fashion. Fortunately, you don’t have to do it!

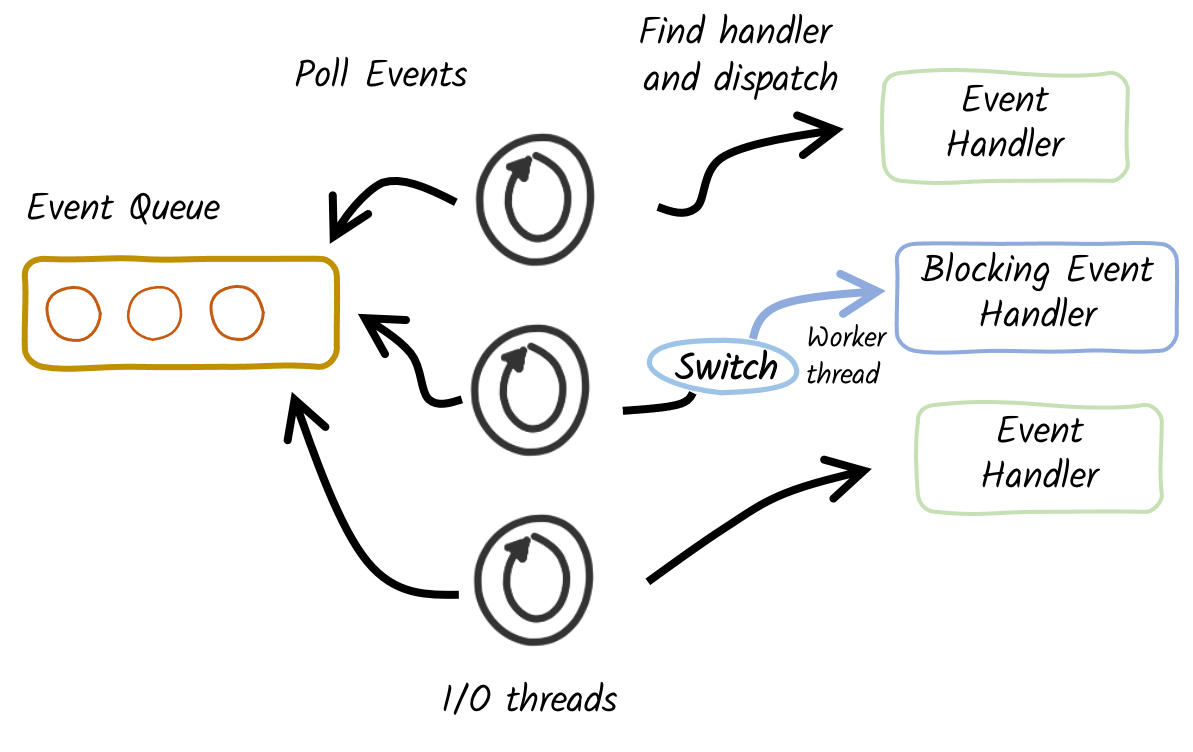

Quarkus is inherently reactive thanks to its reactive engine. But you, as an application developer, don’t have to write reactive code. Quarkus unifies reactive and imperative. It means that you can write traditional blocking applications on Quarkus. But how do you avoid blocking the I/O threads? Quarkus implements a proactor pattern that switches to a worker thread when needed.

Thanks to hints in your code (such as the @Blocking and @NonBlocking annotations), Quarkus extensions can decide when the application logic is blocking or non-blocking.

If we go back to the HTTP endpoint example from above, the HTTP request is always received on an I/O thread.

Then, the extension dispatching that request to your code decides whether to call it on the I/O thread, avoiding thread switches, or on a worker thread.

This decision depends on the extension.

For example, the Quarkus REST (formerly RESTEasy Reactive) extension uses the @Blocking annotation to determine if the method needs to be invoked using a worker thread, or if it can be invoked using the I/O thread.
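The dispatch decision described above can be sketched in plain Java. This is a simplified illustration, not how Quarkus REST is implemented: the `Blocking` annotation declared here is a local stand-in (not the real annotation), and `DispatchSketch`, `Endpoints`, `dispatch`, and `runsOnWorker` are hypothetical names. The calling thread plays the role of the I/O thread, and a single-thread executor plays the role of the worker pool.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Illustrative only: plain JDK code, not a Quarkus API.
class DispatchSketch {

    // Local stand-in for illustration; NOT the real Quarkus annotation.
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.METHOD)
    @interface Blocking {}

    // Each handler reports the name of the thread it ran on.
    static class Endpoints {
        public String fast() { return Thread.currentThread().getName(); }

        @Blocking
        public String slow() { return Thread.currentThread().getName(); }
    }

    static String call(Method handler, Object target) {
        try {
            return (String) handler.invoke(target);
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException(e);
        }
    }

    // Annotated handlers are offloaded to the worker pool; others run on the caller.
    static CompletableFuture<String> dispatch(Object target, String methodName, ExecutorService workers) {
        Method handler;
        try {
            handler = target.getClass().getMethod(methodName);
        } catch (NoSuchMethodException e) {
            throw new IllegalArgumentException(e);
        }
        if (handler.isAnnotationPresent(Blocking.class)) {
            return CompletableFuture.supplyAsync(() -> call(handler, target), workers);
        }
        return CompletableFuture.completedFuture(call(handler, target));
    }

    static boolean runsOnWorker(String methodName) {
        ExecutorService workers = Executors.newSingleThreadExecutor(r -> new Thread(r, "worker-0"));
        try {
            // join() is used only so the demo can inspect the result.
            return dispatch(new Endpoints(), methodName, workers).join().equals("worker-0");
        } finally {
            workers.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println("fast on worker? " + runsOnWorker("fast"));
        System.out.println("slow on worker? " + runsOnWorker("slow"));
    }
}
```

Returning a `CompletableFuture` from `dispatch` keeps the calling thread free in the blocking case, so the thread switch happens only for handlers that declare they need it.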

Quarkus is pragmatic and versatile. You decide how to develop and execute your application. You can use the imperative way, the reactive way, or mix them, using reactive on the parts of the application under high concurrency.

Quarkus Extensions enabling Reactive

Quarkus offers a large set of reactive APIs and features. This section lists the most important, but it’s not an exhaustive list. Quarkus adds new features in every release, and the Quarkiverse proposes many extensions enabling Reactive.

HTTP

- Quarkus REST: an implementation of Jakarta REST tailored for the Quarkus architecture. It follows a reactive-first approach but allows imperative code using the @Blocking annotation.
- Reactive Routes: a declarative way to register HTTP routes directly on the Vert.x router used by Quarkus to route HTTP requests to methods.
- REST Client: allows consuming HTTP endpoints. Under the hood, it uses the non-blocking I/O features from Quarkus.
- Qute: the Qute template engine exposes a reactive API to render templates in a non-blocking manner.

Data

- Hibernate Reactive: a version of Hibernate ORM using asynchronous and non-blocking clients to interact with the database.
- Hibernate Reactive with Panache: provides active record and repository support on top of Hibernate Reactive.
- Reactive PostgreSQL client: an asynchronous and non-blocking client interacting with a PostgreSQL database, allowing high concurrency.
- Reactive MySQL client: an asynchronous and non-blocking client interacting with a MySQL database.
- The MongoDB extension: exposes imperative and reactive (Mutiny) APIs to interact with MongoDB.
- Mongo with Panache: offers active record support for both the imperative and reactive APIs.
- The Cassandra extension: exposes imperative and reactive (Mutiny) APIs to interact with Cassandra.
- The Redis extension: exposes imperative and reactive (Mutiny) APIs to store and retrieve data from a Redis key-value store.

Event-Driven Architecture

- Reactive Messaging: allows implementing event-driven applications using reactive and imperative code.
- Kafka Connector for Reactive Messaging: allows implementing applications consuming and writing Kafka records.
- AMQP 1.0 Connector for Reactive Messaging: allows implementing applications sending and receiving AMQP messages.

Network Protocols and Utilities

- gRPC: implement and consume gRPC services. Offers reactive and imperative programming interfaces.
- GraphQL: implement and query (client) data stores using GraphQL. Offers Mutiny APIs and subscriptions as event streams.
- Fault Tolerance: provides retry, fallback, and circuit breaker capabilities to your application. It can be used with Mutiny types.

Engine

- Vert.x: the underlying reactive engine of Quarkus. The extension gives access to the managed Vert.x instance, as well as its Mutiny variant (exposing the Vert.x API using Mutiny types).
- Context Propagation: captures and propagates contextual objects (transaction, principal…) in a reactive pipeline.